Build 35

User Validation

User validation can be broken into two equally valuable buckets. The more formal bucket, ergonomics and usability testing, seeks to ensure that a design and its execution are comfortable and intuitive to users. Less formally, a walk-through with users might seek to gather their feelings and thoughts as they experience a product or service. While usability testing might reveal that low-contrast buttons are affecting a user’s ability to complete a goal, less quantitative approaches could reveal that users feel a color palette is too institutional for an in-home experience.

Because users don’t have a stake in the product’s success, they provide fresh perspectives and insights. When user validation is conducted before a product’s launch, the input can help to prioritize features, refine executions, and create an experience with broader appeal. Perhaps most importantly, it mitigates the risk of shipping a product or service with embarrassing mistakes or oversights. By regularly integrating user validation into the design and implementation processes, a team ensures fewer surprises and dramatic changes in direction.

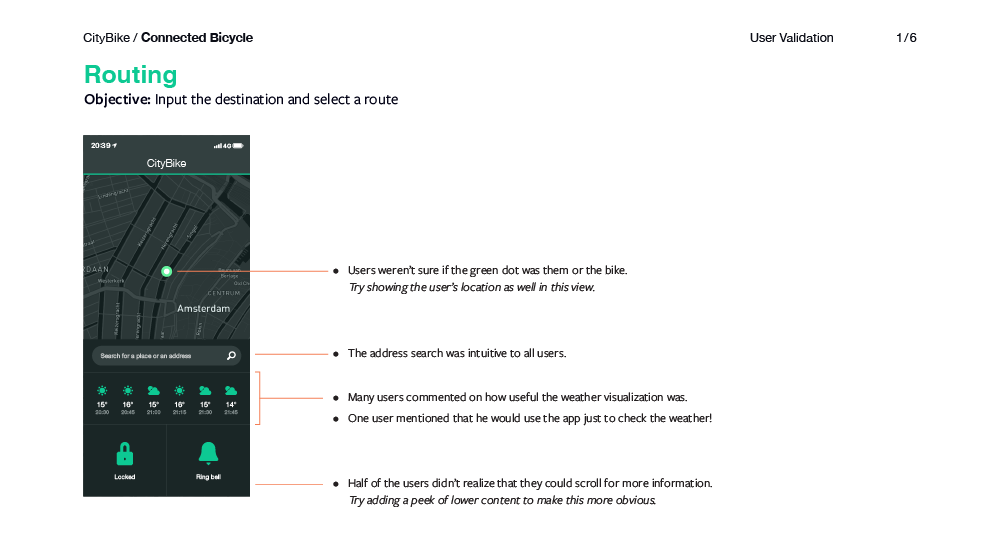

CityBike

Connected Bicycle

For every sprint, CityBike conducted facilitated walk-through sessions using a prototype of the app to ensure that it was intuitive even to new users. The report documents areas for improvement along with suggestions.

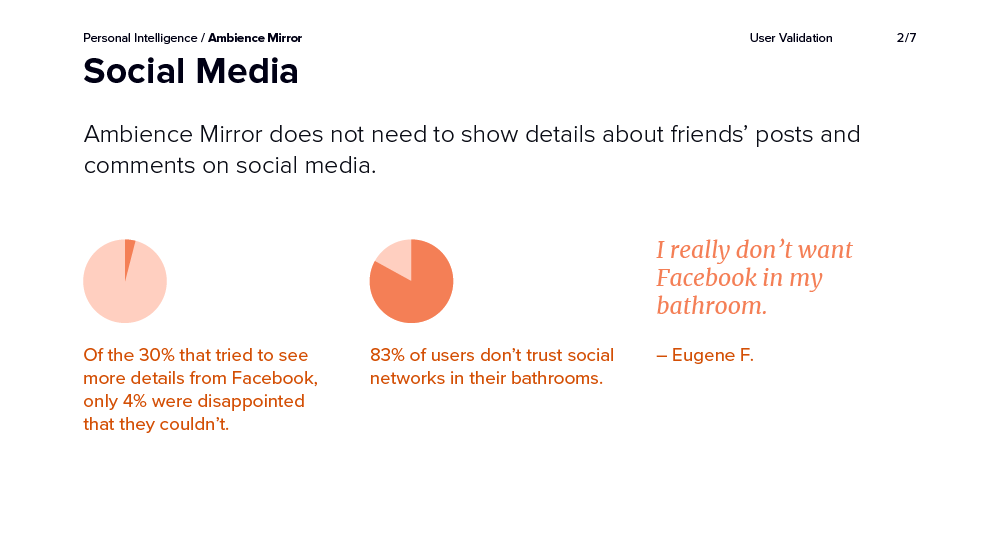

Personal Intelligence

Ambience Mirror

Ambience Mirror used the Wizard of Oz approach, in which a hidden human operator simulates the system’s responses, to provide users with a realistic experience. The report documents outcomes, giving the team confidence that the final product should focus on information over interaction.

Omniscient

Observations Suite

Omniscient uses alpha and beta testing to continually test new builds of Observations Suite. Each release focuses on a specific set of features, and feedback is given via forums. A community manager works with design to review and consolidate recommendations for future releases.

Related Chapters

25

Mock-Ups

How does our product or service look, feel, and sound? How do we communicate this simply to stakeholders?

31

Prototype

What type of prototype should we build? How do we get realistic feedback from users?

33

Design Assurance

How do we ensure quality through execution? What should we expect when collaborating with implementers?

36

Design Backlog

Where do we put ideas and features that we don’t have time or budget for?